The Black Friday Crash

On Black Friday, an e-commerce website faced a disaster. Millions of users rushed to grab deals, but the site slowed down and crashed.

The problem? A single server couldn’t handle the traffic. The team needed load balancing to spread requests across multiple servers.

Load balancing prevents overload, ensures high availability, and improves response times. Let’s dive in.

This is Phase 2 of our series: Handling Scale & Performance Basics.

What is Load Balancing?

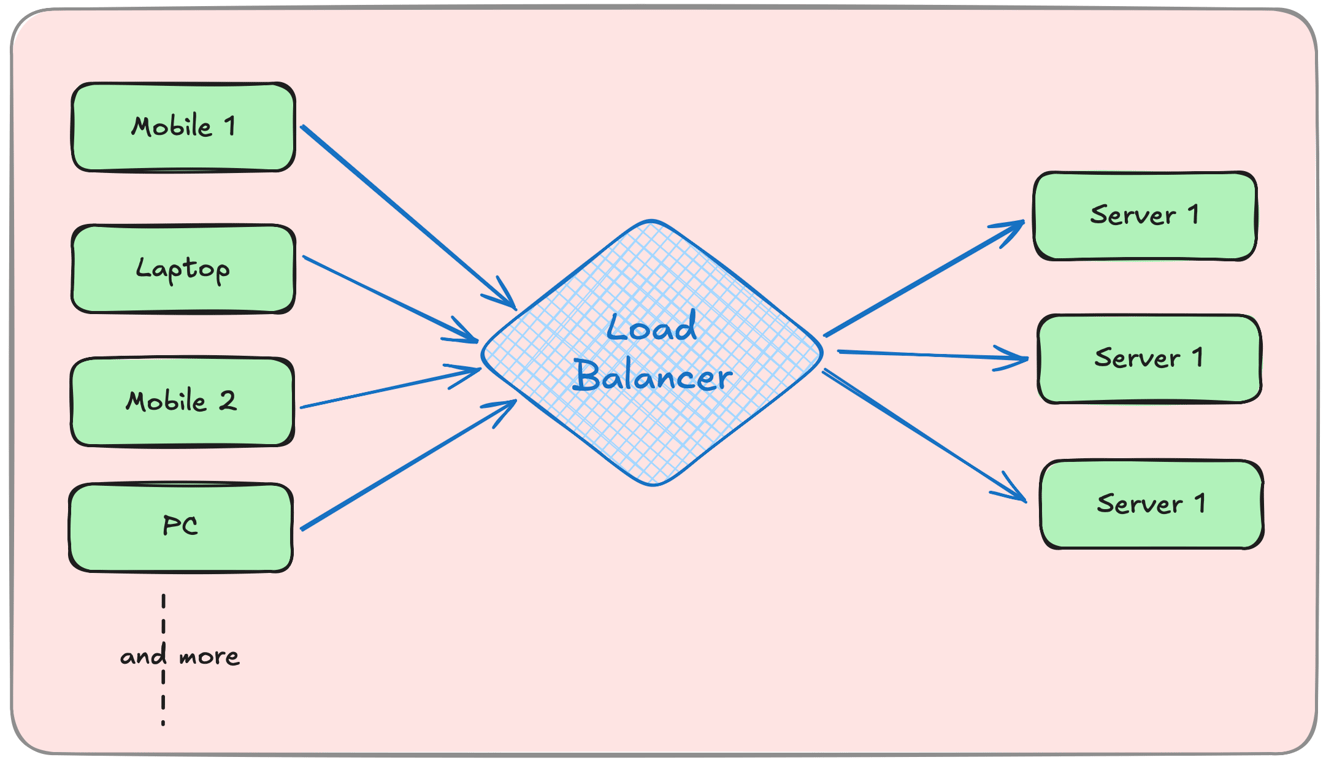

Load balancing is the process of distributing incoming network traffic across multiple servers to ensure no single server is overwhelmed.

It improves:

Scalability – Handles high traffic smoothly.

Reliability – Keeps the service available by routing traffic away from failed servers.

Performance – Reduces response time by optimising resource usage.

How Load Balancers Work

A load balancer sits between clients and servers, directing requests based on predefined rules.
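To make the idea concrete, here is a minimal sketch of a load balancer as a small object that holds a pool of servers and applies a rule to pick one per request. The class name `LoadBalancer`, the `rule` callback, and the health flags are all hypothetical names chosen for illustration; real load balancers do this at the network level with far more machinery.

```python
class LoadBalancer:
    """Toy model: picks a backend for each request using a pluggable rule."""

    def __init__(self, servers, rule):
        # Assumption: health is tracked as a simple up/down flag per server.
        self.healthy = {server: True for server in servers}
        self.rule = rule  # callable: (request, candidates) -> server

    def mark(self, server, is_healthy):
        """Record the result of a (hypothetical) health check."""
        self.healthy[server] = is_healthy

    def route(self, request):
        candidates = [s for s, ok in self.healthy.items() if ok]
        if not candidates:
            raise RuntimeError("no healthy servers available")
        return self.rule(request, candidates)


def first_healthy(request, candidates):
    """Simplest possible rule: always use the first healthy server."""
    return candidates[0]


lb = LoadBalancer(["Server A", "Server B"], rule=first_healthy)
normal = lb.route("GET /checkout")      # Server A is up, so it is chosen

lb.mark("Server A", False)              # simulate Server A failing
rerouted = lb.route("GET /checkout")    # traffic moves to Server B
print(normal, "->", rerouted)
```

The rule is the interesting part: the algorithms covered later in this post are simply different choices for that function.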

Types of load balancers:

Hardware Load Balancers – Dedicated physical devices.

Software Load Balancers – Run as software on standard servers (Nginx, HAProxy) or as managed cloud services (AWS ELB).

Load Balancing Algorithms

Different algorithms determine how requests are distributed.

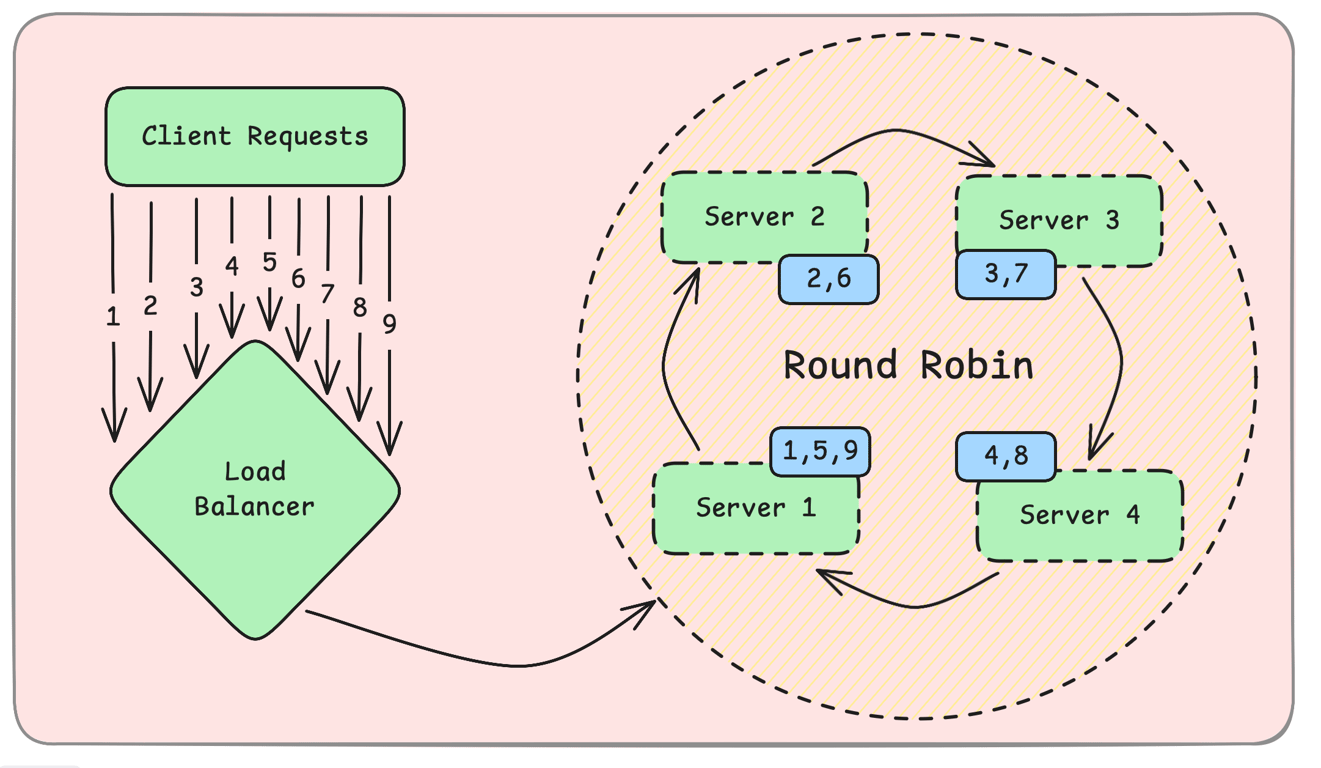

1. Round Robin – Simple and Fair

Each request is sent to the next available server in a cyclic manner.

Example:

Request 1 → Server A

Request 2 → Server B

Request 3 → Server C

Request 4 → Server A (cycle repeats)

Pros: ✔ Easy to implement. ✔ Works well when all servers have equal capacity.

Cons: ✖ Doesn't consider server load. ✖ Can overload a slower server.
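The rotation above can be sketched in a few lines of Python. This is an illustrative toy, not how any particular load balancer implements it; the class name `RoundRobinBalancer` is made up for this example.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out servers in a fixed cyclic order, ignoring their load."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        self._servers = cycle(list(servers))

    def next_server(self):
        return next(self._servers)


balancer = RoundRobinBalancer(["Server A", "Server B", "Server C"])
assignments = [balancer.next_server() for _ in range(4)]
print(assignments)
# ['Server A', 'Server B', 'Server C', 'Server A']
```

Notice that nothing in `next_server` looks at how busy a server is, which is exactly the weakness listed in the cons.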

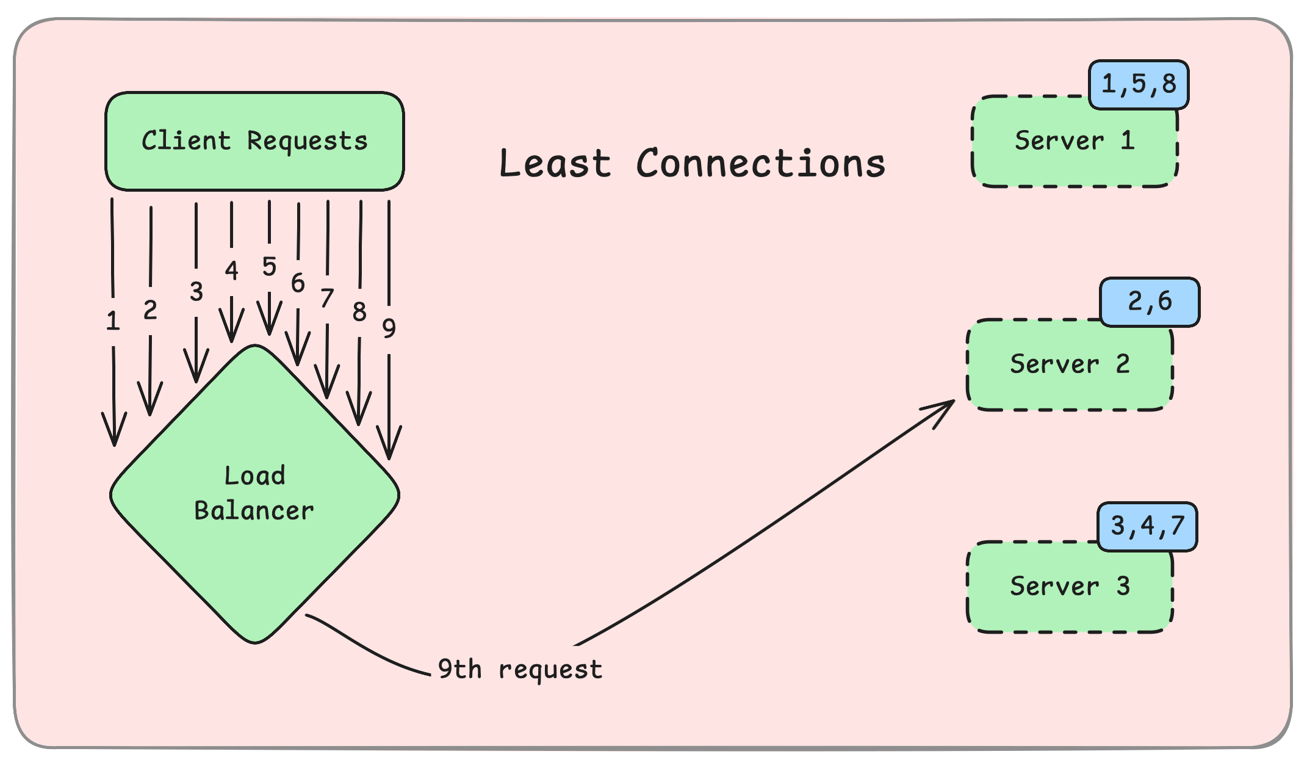

2. Least Connections – Intelligent Distribution

Traffic is sent to the server with the fewest active connections.

Example:

Server A (5 active connections)

Server B (2 active connections) ← Next request goes here

Server C (3 active connections)

Pros: ✔ Balances load dynamically. ✔ Prevents overloading busy servers.

Cons: ✖ Slightly higher overhead to track connections. ✖ Offers little advantage over Round Robin when connections are short-lived.
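Here is a rough sketch of the same example in Python. It assumes the balancer itself keeps the connection counts and is told when a connection closes; the class and method names (`LeastConnectionsBalancer`, `acquire`, `release`) are hypothetical.

```python
class LeastConnectionsBalancer:
    """Sends each new connection to the server with the fewest active ones."""

    def __init__(self, active_connections):
        # Maps server name -> number of currently open connections.
        self.active = dict(active_connections)

    def acquire(self):
        # Ties go to whichever server was listed first.
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call this when a connection to `server` closes.
        if self.active[server] > 0:
            self.active[server] -= 1


balancer = LeastConnectionsBalancer(
    {"Server A": 5, "Server B": 2, "Server C": 3}
)
chosen = balancer.acquire()
print(chosen)                        # Server B
print(balancer.active["Server B"])   # 3
```

The extra bookkeeping in `acquire` and `release` is the tracking overhead mentioned in the cons.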

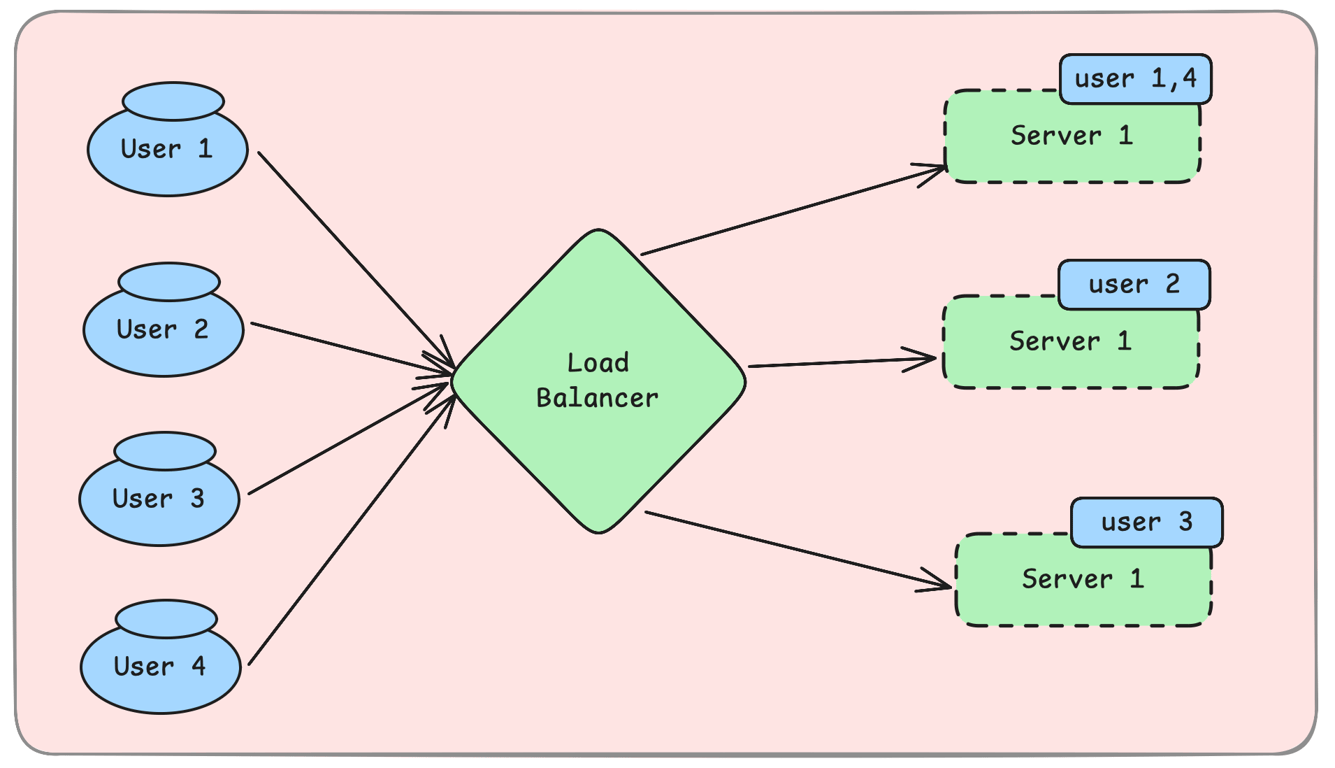

3. Consistent Hashing – Sticky Routing

Requests are routed based on a hash of the client’s IP address or request data. This ensures the same client connects to the same server.

Example:

Hash(User 1) → Server A

Hash(User 2) → Server B

Hash(User 3) → Server C

If a server is removed, only the clients that were mapped to it are reassigned; everyone else stays on the same server.

Pros: ✔ Ensures session persistence. ✔ Efficient when servers are added or removed.

Cons: ✖ Requires a hash computation per request. ✖ Needs a fallback when the chosen server is unavailable.
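A common way to get this behaviour is a hash ring, sketched below. Treat it as one possible illustration rather than the definitive implementation: the `HashRing` class, the use of SHA-256, and the `replicas` setting (virtual nodes per server, which the text above doesn't cover) are all assumptions made for this example.

```python
import bisect
import hashlib


class HashRing:
    """A consistent-hash ring with virtual nodes (illustrative only)."""

    def __init__(self, servers, replicas=100):
        self.replicas = replicas  # assumed number of ring points per server
        self._ring = []           # sorted list of (point, server) pairs
        for server in servers:
            self.add(server)

    @staticmethod
    def _point(key):
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")

    def add(self, server):
        for i in range(self.replicas):
            bisect.insort(self._ring, (self._point(f"{server}#{i}"), server))

    def remove(self, server):
        self._ring = [pair for pair in self._ring if pair[1] != server]

    def lookup(self, key):
        if not self._ring:
            raise LookupError("the ring has no servers")
        # First ring point at or after the key's position, wrapping around.
        index = bisect.bisect_left(self._ring, (self._point(key),))
        return self._ring[index % len(self._ring)][1]


users = [f"User {n}" for n in range(1, 1001)]
ring = HashRing(["Server A", "Server B", "Server C"])
before = {user: ring.lookup(user) for user in users}

ring.remove("Server C")
after = {user: ring.lookup(user) for user in users}

moved = [user for user in users if before[user] != after[user]]
print(f"{len(moved)} of {len(users)} users changed servers")
```

Every user in `moved` was previously on Server C; users on Server A and Server B keep their server, which is the "minimal disruption" property in action.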

Real-World Use Cases

1. E-Commerce Websites

Handles sudden traffic spikes, ensuring smooth checkout processes.

2. Streaming Services (Netflix, YouTube)

Distributes video streams efficiently across multiple servers.

3. Online Gaming Platforms

Helps keep gameplay responsive by preventing any single game server from becoming overloaded and laggy.

Conclusion

Load balancing ensures high availability and optimal performance by distributing traffic efficiently.

Round Robin is simple but doesn’t account for load.

Least Connections dynamically balances requests.

Consistent Hashing provides session persistence.

Next, we’ll explore Caching Basics & Strategies – Why cache? Cache Invalidation, Write-Through, Write-Back.