The Frustrating Delay in Online Orders

Aditi ordered a laptop online. She clicked “Buy Now,” but the page kept loading.

The transaction finally went through, but the delay was annoying. The culprit? High latency.

For a system to feel fast under load, it needs low latency, high throughput, and short processing times.

What is Latency?

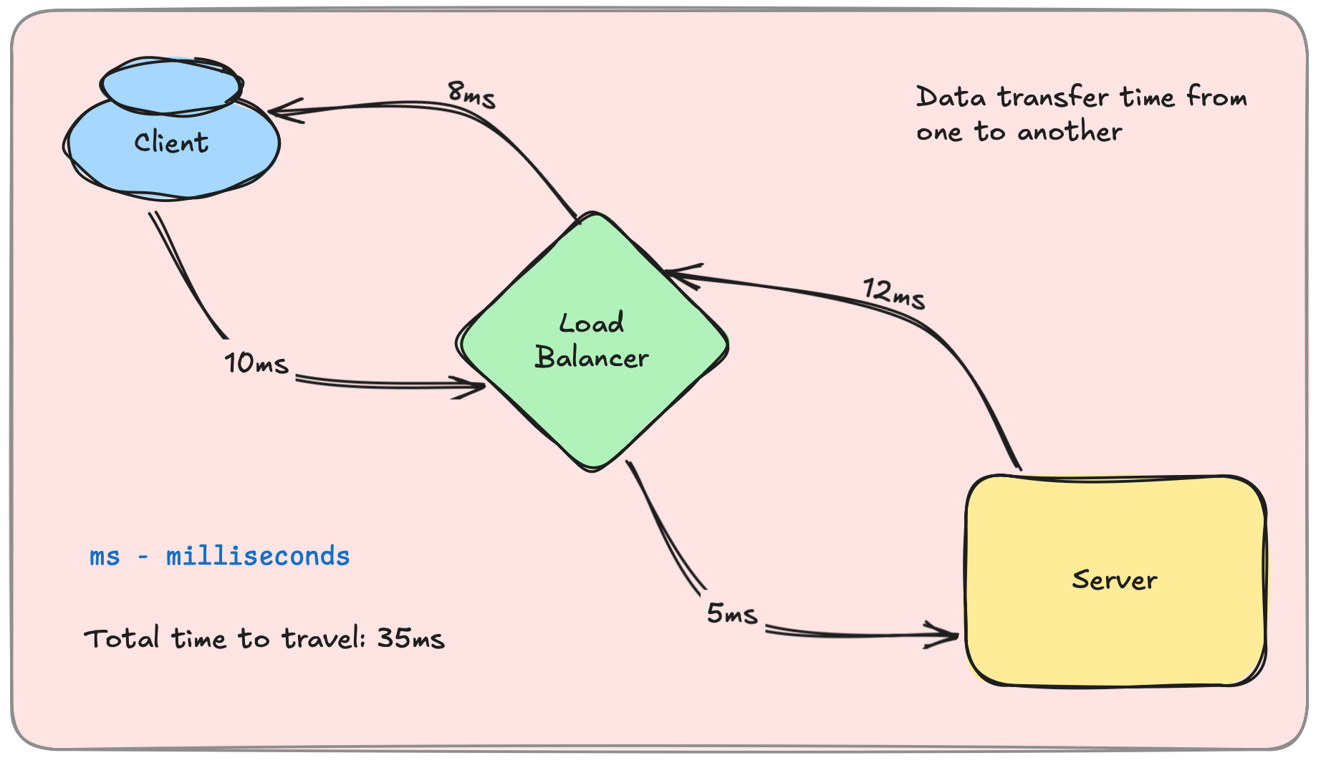

Latency is the time taken for a request to travel from the client to the server and back.

A low-latency system is fast and responsive, while a high-latency system feels sluggish.
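One way to make this definition concrete is to time a request from the caller's side. The sketch below is a minimal illustration, not a benchmarking tool. The names `measure_latency_ms` and `fake_request` are hypothetical, and `fake_request` only sleeps for about 20 ms in place of a real network call.

```python
import time
from typing import Callable, List


def measure_latency_ms(send_request: Callable[[], object], samples: int = 5) -> List[float]:
    """Time `send_request` end to end and return one reading per sample, in ms."""
    timings = []
    for _ in range(samples):
        start = time.perf_counter()
        send_request()  # client -> server -> client, as seen by the caller
        timings.append((time.perf_counter() - start) * 1000.0)
    return timings


def fake_request() -> str:
    # Hypothetical stand-in for a real network call: sleeps for about 20 ms.
    time.sleep(0.02)
    return "ok"


if __name__ == "__main__":
    readings = measure_latency_ms(fake_request)
    print(f"min={min(readings):.1f} ms  max={max(readings):.1f} ms")
```

Taking several samples matters because a single reading can be skewed by a one-off delay. In practice you would pass a function that performs the real request instead of `fake_request`.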

Types of Latency

1. Network Latency – The Travel Time

The time taken for data to move between servers and users.

Affected by distance, network congestion, and routing inefficiencies.

Example: Slow webpage loading due to a high round-trip time (RTT).

2. Processing Latency – The Server’s Speed

The time taken for a server to process a request and generate a response.

Affected by CPU performance, database queries, and application logic.

Example: A slow SQL query delaying an API response.
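To see how the two types add up, here is a small worked sketch. It assumes the total response time is simply the network round trip plus the server's processing time. The `LatencyBreakdown` class and all the numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyBreakdown:
    network_rtt_ms: float  # time spent travelling to the server and back
    processing_ms: float   # time the server spends building the response

    @property
    def total_ms(self) -> float:
        return self.network_rtt_ms + self.processing_ms

    @property
    def processing_share(self) -> float:
        """Fraction of the total spent on the server (0.0 to 1.0)."""
        return self.processing_ms / self.total_ms


if __name__ == "__main__":
    # Hypothetical numbers: a slow SQL query dominates the response time.
    slow_query = LatencyBreakdown(network_rtt_ms=80, processing_ms=320)
    # The same request after the query has been optimized.
    fast_query = LatencyBreakdown(network_rtt_ms=80, processing_ms=20)
    print(slow_query.total_ms, fast_query.total_ms)
```

A breakdown like this shows where to spend effort. In the first case the server accounts for most of the delay, so a CDN would help far less than fixing the query.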

What is Throughput?

Throughput is the number of requests a system can handle in a given period, usually expressed as requests per second.

A high-throughput system can serve more users efficiently, while a low-throughput system struggles under load.

Example: An API that processes 1,000 requests per second has higher throughput than one handling 100 requests per second.
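The arithmetic behind these numbers can be sketched in a few lines. The first function computes measured throughput. The second gives a rough upper bound from Little's Law, assuming each worker handles one request at a time and never sits idle. Both function names are hypothetical.

```python
def throughput_rps(completed_requests: int, elapsed_seconds: float) -> float:
    """Measured throughput: completed requests divided by elapsed time."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return completed_requests / elapsed_seconds


def max_throughput_rps(workers: int, latency_ms: float) -> float:
    """Rough ceiling on throughput when each worker serves one request at a time.

    Rearranged from Little's Law (concurrency = throughput x latency).
    """
    if latency_ms <= 0:
        raise ValueError("latency_ms must be positive")
    return workers * 1000.0 / latency_ms


if __name__ == "__main__":
    print(throughput_rps(1000, 1.0))    # 1000.0
    print(max_throughput_rps(10, 100))  # 100.0
    print(max_throughput_rps(10, 50))   # 200.0
```

The last two lines show why latency and throughput are linked: with the same ten workers, halving the time per request doubles the ceiling on requests per second.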

Optimizing Performance – Reducing Latency & Increasing Throughput

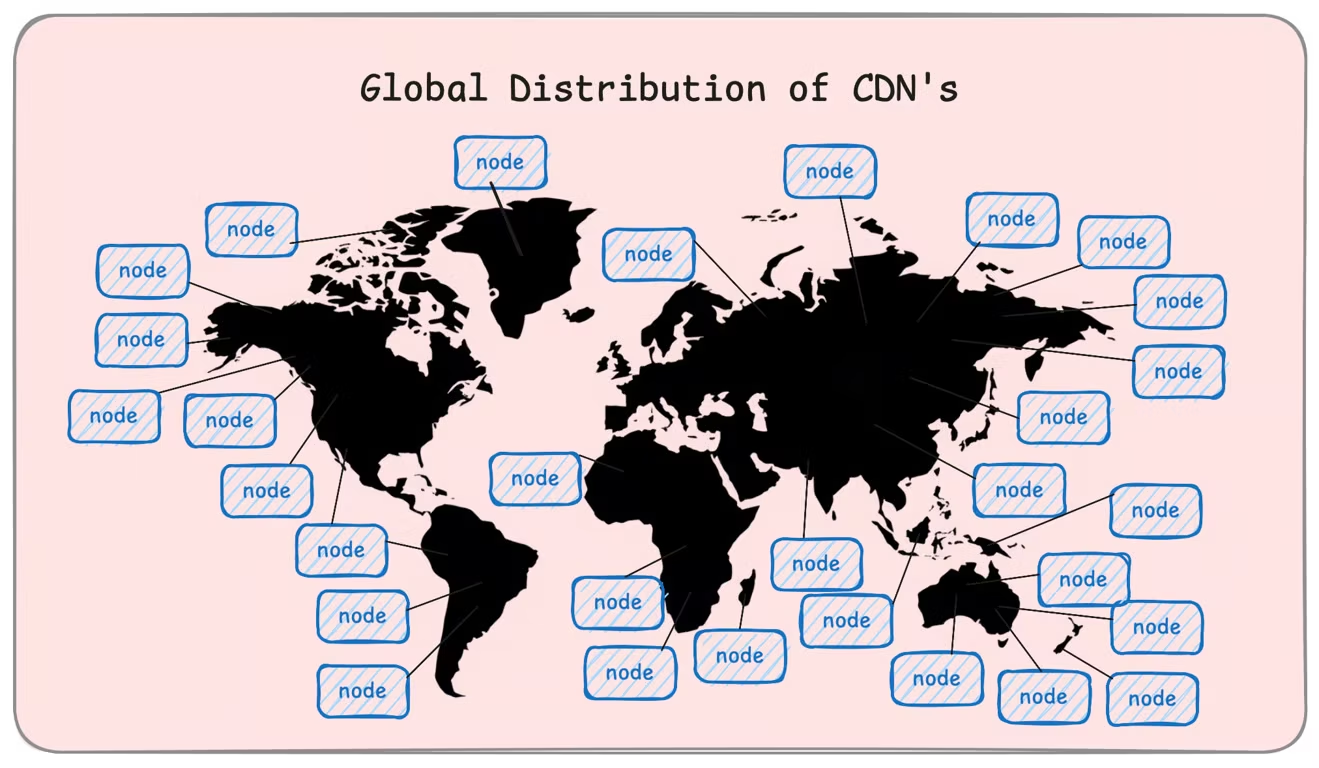

1. Reducing Network Latency

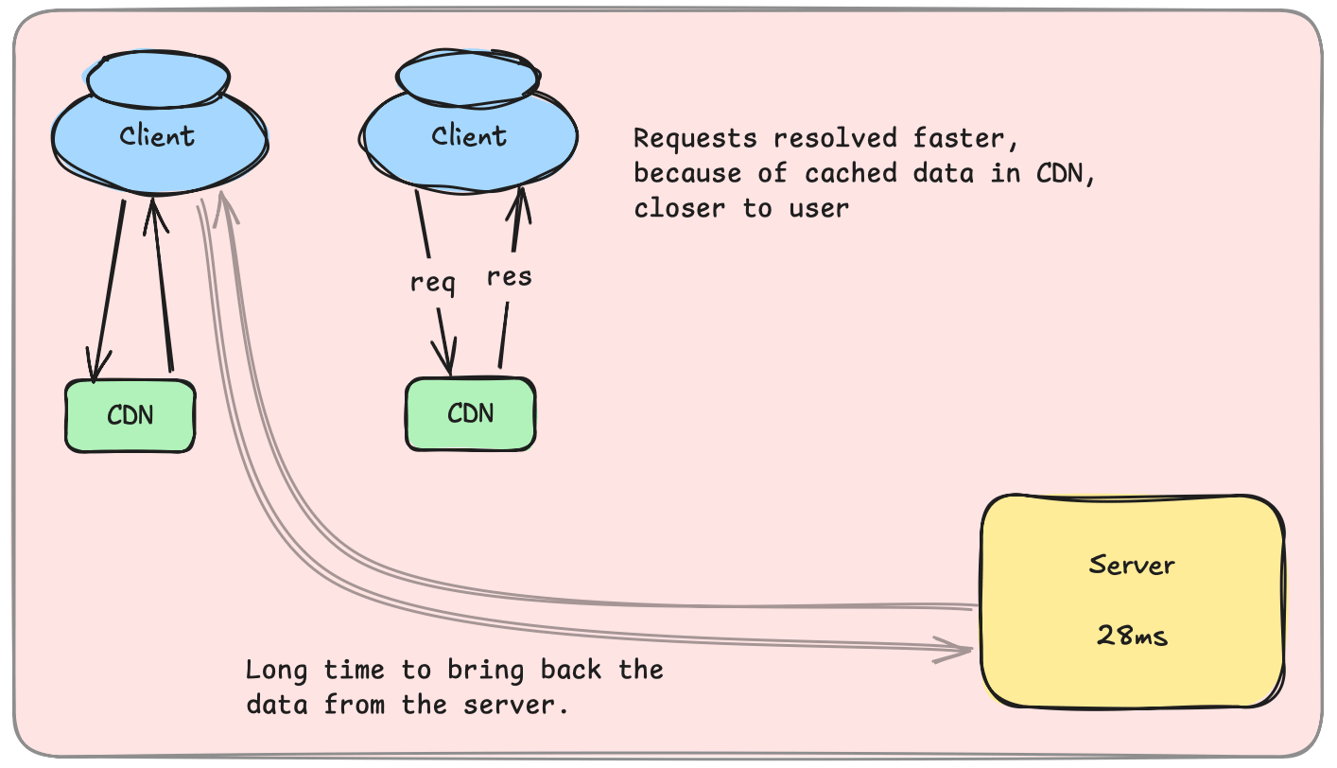

✔ Use Content Delivery Networks (CDNs) to cache data closer to users.

✔ Optimize DNS resolution to reduce lookup delays.

✔ Implement compression (Gzip, Brotli) to shrink payload sizes.

Example: Netflix uses CDNs to serve videos faster worldwide.
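The compression item in the checklist above is easy to try with Python's standard `gzip` module. This is a minimal sketch with a made-up JSON payload. `build_sample_payload` and `compress_payload` are hypothetical helpers, and real savings depend on how repetitive the data is.

```python
import gzip
import json


def build_sample_payload(count: int = 200) -> bytes:
    """Build a repetitive JSON body, similar in shape to a product listing."""
    products = [
        {"id": i, "name": f"Laptop model {i}", "in_stock": True}
        for i in range(count)
    ]
    return json.dumps(products).encode("utf-8")


def compress_payload(payload: bytes, level: int = 6) -> bytes:
    """Gzip-compress a response body before sending it over the network."""
    return gzip.compress(payload, compresslevel=level)


if __name__ == "__main__":
    raw = build_sample_payload()
    compressed = compress_payload(raw)
    # The client must get the exact original bytes back after decompression.
    assert gzip.decompress(compressed) == raw
    print(f"raw: {len(raw)} bytes, gzip: {len(compressed)} bytes")
```

In most deployments the web server or CDN applies compression automatically, so application code like this is rarely needed. The sketch only shows the effect on payload size.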

2. Optimizing Processing Latency

✔ Use efficient indexing to speed up database queries.

✔ Implement caching (Redis, Memcached) to reduce database hits.

✔ Optimize server-side code and eliminate bottlenecks.

Example: E-commerce sites cache product details to reduce database queries.
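The product-details example can be sketched with a small in-memory cache. This is an illustration of the idea only: a plain dictionary stands in for Redis or Memcached, and `TTLCache` and `load_product_from_db` are hypothetical names.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Keep loaded values for `ttl_seconds` so repeat reads skip the database."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get_or_load(self, key: str, loader: Callable[[str], Any]) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: no database call
        value = loader(key)  # cache miss or expired entry: load and store
        self._entries[key] = (now + self._ttl, value)
        return value


def load_product_from_db(product_id: str) -> dict:
    # Hypothetical stand-in for a real (slow) database query.
    return {"id": product_id, "name": "Laptop"}


if __name__ == "__main__":
    cache = TTLCache(ttl_seconds=60)
    first = cache.get_or_load("p-1", load_product_from_db)   # loads from the "database"
    second = cache.get_or_load("p-1", load_product_from_db)  # served from the cache
    print(first == second)
```

The expiry time is the main design choice. A longer TTL saves more database hits but risks showing stale data, such as an outdated price.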

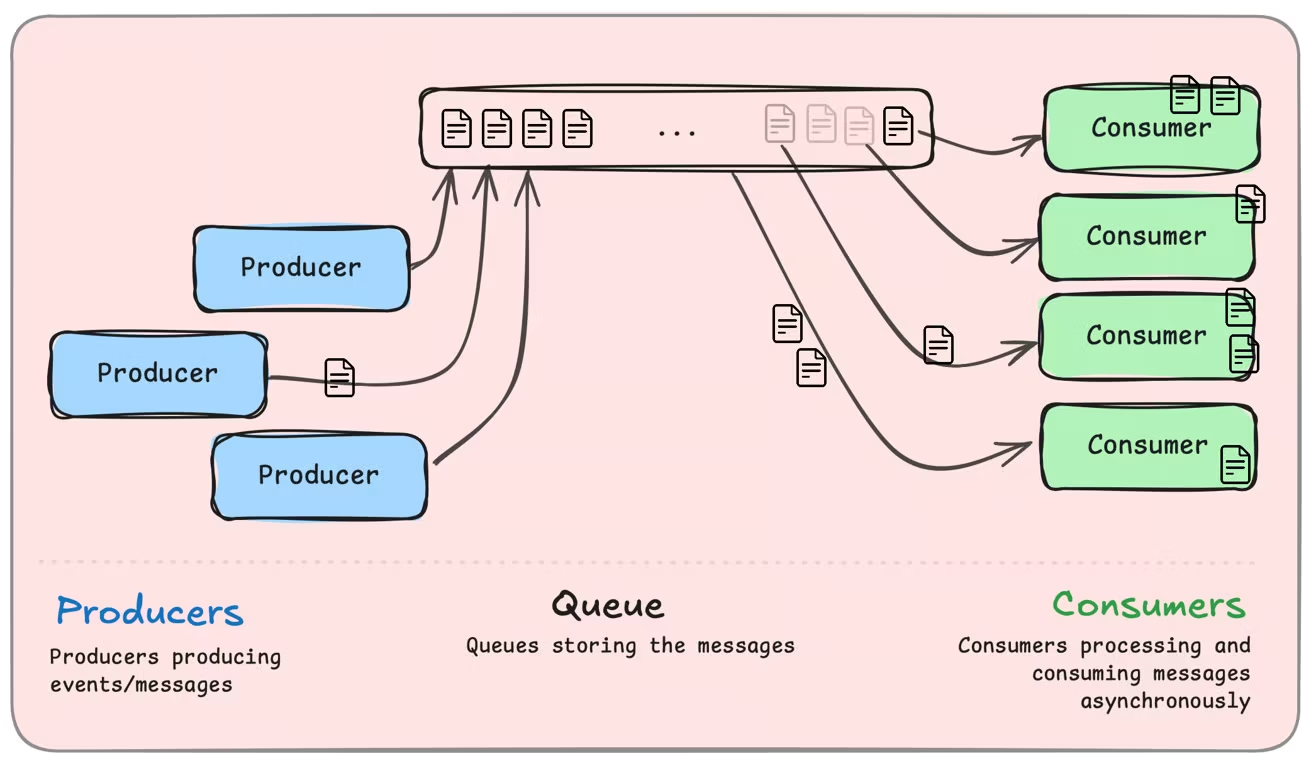

3. Improving Throughput

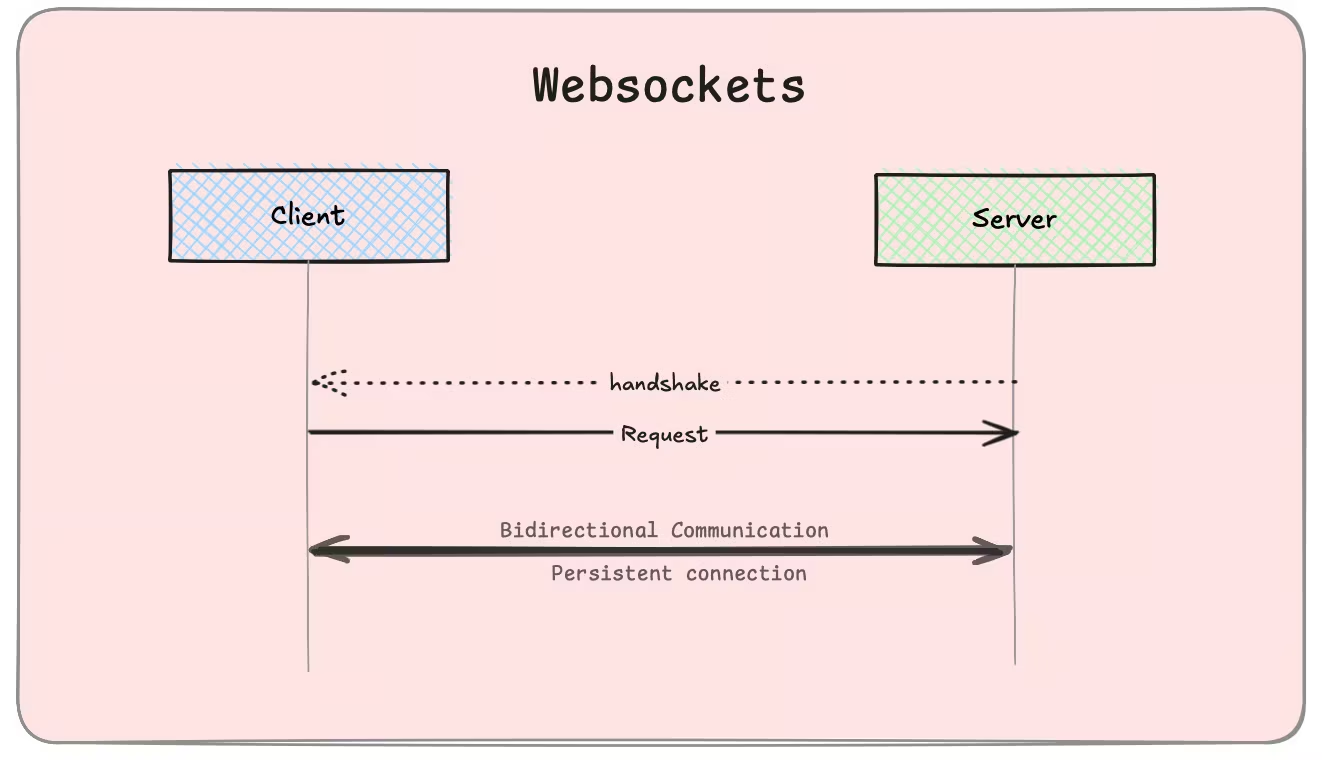

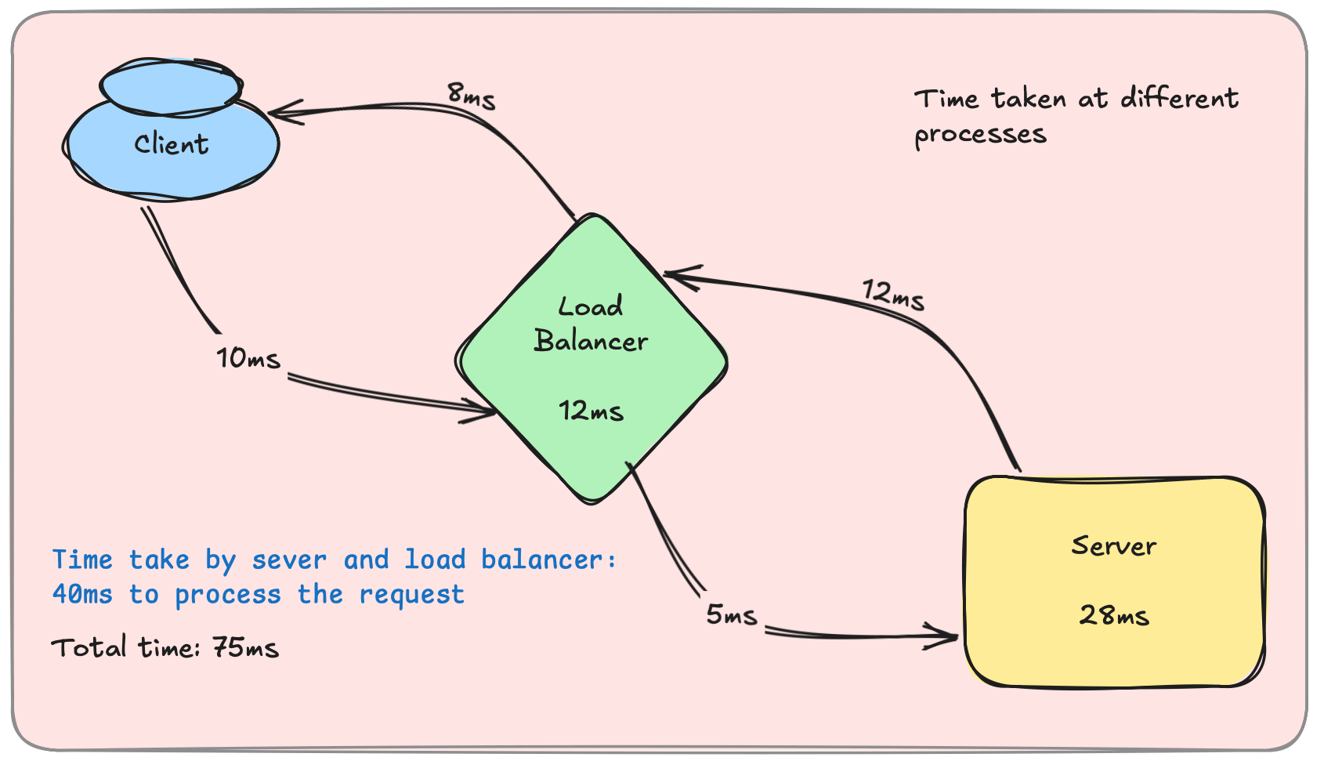

✔ Implement load balancing to distribute requests across multiple servers.

✔ Use asynchronous processing to handle background tasks efficiently.

✔ Scale horizontally with auto-scaling clusters.
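The asynchronous processing item can be illustrated with a queue and a single worker thread. In this minimal sketch the request path only enqueues the job and returns at once, while the slow work happens in the background. `BackgroundWorker` is a hypothetical class, and a production system would more likely use a dedicated message queue.

```python
import queue
import threading
from typing import List


class BackgroundWorker:
    """Accept jobs immediately and process them on a separate thread."""

    def __init__(self) -> None:
        self._jobs: queue.Queue = queue.Queue()
        self.processed: List[str] = []
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def submit(self, order_id: str) -> str:
        self._jobs.put(order_id)  # cheap: the caller does not wait for the work
        return "accepted"

    def _run(self) -> None:
        while True:
            order_id = self._jobs.get()
            try:
                if order_id is None:  # sentinel value: stop the worker
                    return
                # Stand-in for slow work such as sending a confirmation email.
                self.processed.append(f"email sent for {order_id}")
            finally:
                self._jobs.task_done()

    def shutdown(self) -> None:
        self._jobs.put(None)
        self._thread.join()


if __name__ == "__main__":
    worker = BackgroundWorker()
    print(worker.submit("order-1"))
    worker.shutdown()
    print(worker.processed)
```

Because the user-facing request finishes as soon as the job is queued, the server is free to accept the next request, which is what raises throughput.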

Example: Twitter handles very large request volumes by spreading traffic across load-balanced microservices.
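The simplest load-balancing strategy, round robin, hands each new request to the next server in turn. The sketch below assumes a fixed list of healthy servers with made-up names. Real load balancers also track health checks and current load, and `RoundRobinBalancer` is a hypothetical class, not any product's API.

```python
import itertools
from typing import Iterable


class RoundRobinBalancer:
    """Hand out servers in a repeating cycle so requests spread evenly."""

    def __init__(self, servers: Iterable[str]) -> None:
        self._servers = list(servers)
        if not self._servers:
            raise ValueError("at least one server is required")
        self._cycle = itertools.cycle(self._servers)

    def next_server(self) -> str:
        return next(self._cycle)


if __name__ == "__main__":
    balancer = RoundRobinBalancer(["app-1", "app-2", "app-3"])
    for request_id in range(6):
        print(request_id, "->", balancer.next_server())
```

With three servers and six requests, each server receives exactly two, so no single machine becomes the bottleneck.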

Real-World Use Cases

1. Streaming Services (Netflix, YouTube)

Reduce network latency using CDNs.

Optimize throughput with high-bandwidth servers.

2. Financial Transactions (Banking, Trading Apps)

Use low-latency networks to execute transactions in milliseconds.

Process requests efficiently using optimized database queries.

3. Online Gaming (Multiplayer Games)

Minimize lag by reducing network latency.

Ensure high throughput for real-time gameplay.

Conclusion

For a fast, scalable system:

Reduce network latency using CDNs and compression.

Minimize processing latency with caching and optimized queries.

Increase throughput by load balancing and scaling.

Next, we’ll explore Message Queues & Event-Driven Architectures – Kafka, RabbitMQ, SQS, and Webhooks. Stay tuned.